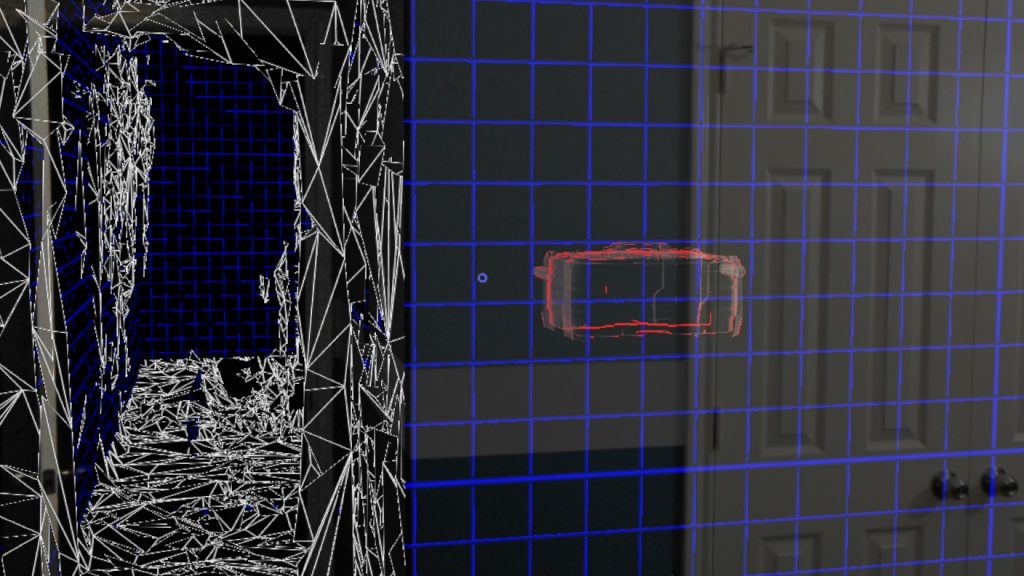

Back in April I got my hands on one of the first HoloLens dev kits. Since then I’ve been playing around and learning the new mechanics behind programming for Mixed Reality. A lot of aspects of the HoloLens hardware are new to the programming community. One in particular is that the HoloLens generates a beautiful and fairly accurate mesh of the environment around you. The only problem is that this mesh can have overlapping polygons, holes, and jagged edges. The raw mesh alone also doesn’t give you any useful information about its parts. It doesn’t tell you which parts of the mesh are walls, floors, ceilings, tables, etc. The HoloLens just sees it as one huge object/mesh.

Even though the raw mesh doesn’t provide you with any useful information, Microsoft does provide the HoloToolkit, which has a tool to do some mesh processing. However, I found the information calculated by the HoloToolkit to be very broad and generic. For instance, it will give you a list of planes and tell you whether each plane is a wall, floor, ceiling, or table. But the planes I received when I ran the tool were patchy, didn’t match the full lengths of the walls, and on some occasions extended past the surface they were supposed to represent. This became extremely annoying in my two-story house with a loft, where it kept trying to split the two floors in half with a giant plane through the middle of my house. These planes are not accurate enough to use for real-world physics calculations without some object falling through the map. They can, however, be used to find a basic blank spot on a wall to put a hologram (like, say, an alien invading mother-ship… RoboRaid).

With that said, depending on the app you are developing, you may not have as specific a need as I do and can use the HoloToolkit. However, those of us who need a little more information about our surroundings are, at the moment, required to develop our own mesh processor.

For my first implementation I started out with a grid-based approach, converting the distributed vertices of the mesh into a three-dimensional grid that I could use to calculate my information. However, as I soon learned, there are a lot of problems with this approach. For starters, the HoloLens sets its World Origin from whatever direction it is pointing when the app starts, so the grid axes rarely line up with the room. That made the grid-based approach give all sorts of incorrect data whenever the grid wasn’t aligned with the walls in the mesh. Parts of the mesh that sat at an angle suffered from this grid, cellular-automata-style approach as well.
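To make the alignment problem concrete, here is a minimal sketch in Python (not the code I ran on the HoloLens). The helper names `voxelize`, `wall_points`, and `z_layers`, the 0.25 m cell size, and the sampled wall are all made up for illustration. It snaps points from a flat wall into grid cells and counts how many grid layers the wall ends up occupying.

```python
import math

def voxelize(points, cell_size):
    """Snap each point to the integer index of the grid cell that contains it."""
    return {tuple(math.floor(c / cell_size) for c in p) for p in points}

def wall_points(angle_deg, length=4.0, height=2.0, step=0.1):
    """Sample a flat vertical wall through the origin, rotated about the Y (up) axis."""
    a = math.radians(angle_deg)
    points = []
    for i in range(int(length / step)):
        for j in range(int(height / step)):
            d = i * step  # distance along the wall
            points.append((d * math.cos(a), j * step, d * math.sin(a)))
    return points

def z_layers(cells):
    """Count how many distinct grid layers along Z the occupied cells span."""
    return len({z for _, _, z in cells})

aligned = voxelize(wall_points(0), cell_size=0.25)   # wall parallel to the grid
rotated = voxelize(wall_points(30), cell_size=0.25)  # same wall, app started at an angle

print("aligned wall spans", z_layers(aligned), "layer(s)")
print("rotated wall spans", z_layers(rotated), "layer(s)")
```

The aligned wall stays inside a single layer of cells, so it is easy to recognise as one surface. The same wall seen from a rotated origin is smeared across a staircase of cells, which is roughly the kind of bad data the grid kept giving me.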

For my second attempt I scrapped my entire first attempt and went with a far more complicated and accurate approach. This new approach required finding the faces that vertices belong to by comparing the distance between vertices, the normals of the vertices, and the direction to each vertex’s neighbors. To make the result even smoother I added a Laplacian smoothing filter with an HC algorithm. The resulting mesh was much smoother and more accurate. I was even able to find out which vertices were missing neighbors, which I then use to recognize holes in the mesh and attempt to fill them. I will also note that in my video I mentioned the smoothing algorithm would take a long time and freeze the mesh. I was able to update that algorithm so that it runs in real time by only running it on the vertices currently being updated and their neighboring vertices.
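The two ideas in that paragraph that are easiest to show in code are the "only smooth what changed" trick and the hole detection. Below is a rough Python sketch rather than my actual implementation. It uses plain Laplacian smoothing and leaves out the HC correction step, and it finds hole candidates with the common "edge used by only one triangle" test, which is an assumption standing in for my missing-neighbor check. The function names and the `factor` parameter are invented for the example.

```python
from collections import defaultdict

def build_neighbors(triangles):
    """Map each vertex index to the set of vertices it shares an edge with."""
    neighbors = defaultdict(set)
    for a, b, c in triangles:
        neighbors[a].update((b, c))
        neighbors[b].update((a, c))
        neighbors[c].update((a, b))
    return neighbors

def boundary_vertices(triangles):
    """Vertices on an edge used by exactly one triangle (a hole or the mesh rim)."""
    edge_count = defaultdict(int)
    for a, b, c in triangles:
        for u, v in ((a, b), (b, c), (c, a)):
            edge_count[(min(u, v), max(u, v))] += 1
    return {v for edge, n in edge_count.items() if n == 1 for v in edge}

def smooth_region(vertices, triangles, updated, factor=0.5):
    """Laplacian-smooth only the updated vertices and their direct neighbors.

    Every other vertex is returned unchanged, which keeps the cost
    proportional to the size of the update rather than the whole mesh.
    """
    neighbors = build_neighbors(triangles)
    region = set(updated)
    for v in updated:
        region |= neighbors[v]

    result = list(vertices)
    for v in region:
        ring = neighbors[v]
        if not ring:
            continue  # isolated vertex: nothing to average against
        # Average of the neighbors' original positions.
        centroid = tuple(sum(vertices[n][i] for n in ring) / len(ring)
                         for i in range(3))
        # Move the vertex part of the way toward that average.
        result[v] = tuple(p + factor * (c - p)
                          for p, c in zip(vertices[v], centroid))
    return result
```

As a quick check, take a spike vertex surrounded by four flat neighbors: calling `smooth_region` with `updated={0}` pulls the spike halfway down toward the plane, and `boundary_vertices` reports the four outer vertices because their outer edges each belong to only one triangle.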

The next thing I plan to do, now that I have information about common faces, is use it to find the common planes between those faces, hopefully giving me more detailed planes at a much higher fidelity.